How to Test Your Dragon: Breaking Down a Team Test Strategy for Distributed Systems, Part 1

Created by: Richard Kevin Kabiling

Oct 10, 2022

Overview

In this two-part series, we explore and detail a functional test strategy for systems with distributed architectures (or dragons, as I like to call them). Teams may use this strategy as a jumping-off point to guide their test practice when developing larger systems composed of multiple distributed applications.

Despite Automation

DevOps Research and Assessment and Accelerate, among other publications, describe reliable test automation as a predictor of good software quality and, consequently, strong IT performance.

As established and accepted as test automation may be, large and unwieldy architectures leave a lot of room for discretion on which parts of the system to test and how to test them. Without a plan or strategy, teams end up with inefficient test suites that have no apparent purpose other than simply testing the system. This confusion is particularly prevalent in teams that are still maturing in their automation journey.

Common issues include but are not limited to:

- Long-running, expensive to write, or brittle tests

- Confusion between unit and integration tests

- Lack of direction and focus in tests

- Endless discussions on scenario overlap between test types

- Lack of delineation between test suites

Addressing these issues requires chopping and dicing the dragon wherever we can and planning appropriate tests for each part.

What are We Testing?

The effectiveness of a test strategy relies heavily on how well it can navigate through and pivot around the system under test. For that, it is best to understand what comprises an application and the distributed system that backs it.

User Interface and the Front-end

Users generally interact with the system through the front-end application’s user interface. Nowadays, this is usually a browser or a mobile application.

![A wireframe, as illustrated by Balsamiq](https://files.readme.io/4bbbb85-mobile-web.png)

A wireframe, as illustrated by Balsamiq in What are Wireframes?

Depending on the architecture, this layer may also include a Back-end for Front-end (BFF): a back-end service tailored to the needs of a particular user interface (e.g., specific mobile application requirements). A BFF is beneficial for systems with multiple form factors or front-ends. Whether the BFF belongs here or in a deeper layer of the system can be a polarizing topic and is out of scope. For this discussion, simply remember that the answer depends on the level of coupling between the exposed UI and the BFF, and may vary with the system under test.

Systems and System Components

![Front-end and Back-end Iceberg](https://files.readme.io/ae7130a-front-end-back-end-iceberg.jpeg)

Front-end and Back-end Iceberg, according to Alvin Foo

In reality, even the most straightforward user interfaces rely on larger systems, themselves built on top of other systems, to deliver their full functionality and user experience. Behind these user interfaces are systems that enable a swathe of functionality such as payment processing, search autocomplete, post search, and ride-hailing.

Within these systems live multitudes of microservices, databases, and other system components that enable finer-grained functionality: simple business logic execution, orchestration, state management, batch processing, communication with third parties, notifications, and so on. Together, they fulfill the system’s larger goal.

Hexagonal Architecture

Let’s zoom in further. A single system component follows an application architecture, and there are various architectures it may follow. The following are well-known examples; they overlap to varying degrees and differ slightly in focus.

- Onion

- Clean

- Hexagonal

Henceforth, we will focus on hexagonal architecture. I prefer it because it elevates the domain (see Domain Driven Design) by separating the application into distinct parts, each with a specific function, arranged around the domain and core business logic. Consequently, applications that follow the hexagonal architecture tend to be very testable.

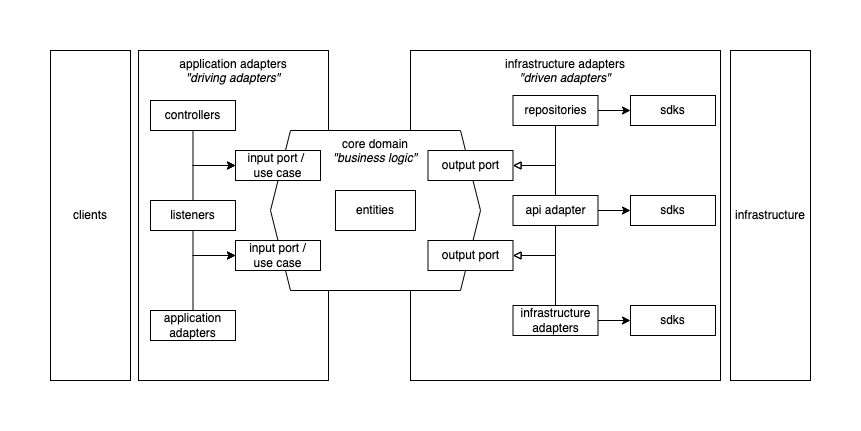

Hexagonal Architecture, Core Domain, Application, and Infrastructure Adapters

In detail, this architecture requires the following parts:

- Core domain or business logic - contains the core processing logic and business rule validation over entities.

- Application or driving adapters - drive the core domain by taking input, translating it, and then dispatching commands. These adapters use interfaces defined by the core domain when sending commands. Examples include controllers and queue listeners.

- Infrastructure or driven adapters - are driven by the core domain and serve as gateways to infrastructure components such as datastores. These adapters implement the interfaces prescribed by the core domain.
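To make the three parts concrete, here is a minimal sketch in Python. Everything in it is hypothetical and chosen only for illustration: the money-transfer domain, the names `Account`, `AccountRepository`, `TransferService`, `InMemoryAccountRepository`, and `handle_transfer_request`, and the payload shape. For brevity, the driving adapter calls the concrete service directly rather than going through a separate inbound interface.

```python
from dataclasses import dataclass
from typing import Protocol


# --- Core domain: business rules plus the port it expects others to implement ---
@dataclass
class Account:
    account_id: str
    balance: int  # minor units (e.g. cents) to avoid float rounding


class AccountRepository(Protocol):
    """Port defined by the core domain; driven adapters implement it."""

    def get(self, account_id: str) -> Account: ...
    def save(self, account: Account) -> None: ...


class InsufficientFunds(Exception):
    pass


class TransferService:
    """Core business logic; knows nothing about HTTP, queues, or databases."""

    def __init__(self, accounts: AccountRepository) -> None:
        self._accounts = accounts

    def transfer(self, source_id: str, target_id: str, amount: int) -> None:
        if amount <= 0:
            raise ValueError("amount must be positive")
        if source_id == target_id:
            raise ValueError("source and target must differ")
        source = self._accounts.get(source_id)
        target = self._accounts.get(target_id)
        if source.balance < amount:
            raise InsufficientFunds(source_id)
        source.balance -= amount
        target.balance += amount
        self._accounts.save(source)
        self._accounts.save(target)


# --- Infrastructure (driven) adapter: implements the port ---
class InMemoryAccountRepository:
    def __init__(self, accounts: list[Account]) -> None:
        self._rows = {a.account_id: a.balance for a in accounts}

    def get(self, account_id: str) -> Account:
        return Account(account_id, self._rows[account_id])

    def save(self, account: Account) -> None:
        self._rows[account.account_id] = account.balance


# --- Application (driving) adapter: translates input into a command ---
def handle_transfer_request(service: TransferService, payload: dict) -> dict:
    try:
        service.transfer(payload["from"], payload["to"], int(payload["amount"]))
    except (InsufficientFunds, ValueError) as error:
        return {"status": 422, "error": type(error).__name__}
    return {"status": 200}
```

Because the core depends only on the `AccountRepository` port, a test can hand it the in-memory adapter, while a deployed application could supply a database-backed one without touching `TransferService`.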

There’s a lot of material that explores the subject. Note, however, that different sources may use slightly different naming conventions. What’s important is that they all express the same intention and thought.

Strategy at a High Level: Test Pyramid

![Test pyramid, as described by Mike Cohn in Succeeding with Agile](https://files.readme.io/1f1341b-ta-image2.png)

The test pyramid, as described by Mike Cohn in [Succeeding with Agile](https://www.oreilly.com/library/view/succeeding-with-agile/9780321660534/)

DevOps Research and Assessment best describes the application of the test pyramid:

A specific design goal of an automated test suite is to find errors as early as possible. This is why faster-running unit tests run before slower-running acceptance tests, and both are run before any manual testing.

More specifically, tests found higher in the pyramid (more integrated, as signified by the arrow on the left) tend to be:

- more complex test objects - test objects are composed of more integrated components that interact with each other

- more brittle - small changes tend to break more tests

- more expensive to write - tests contain a lot of orchestration and particulars involved across one or more components

- more time-consuming - tests take more time to run and are therefore costly to run repeatedly

In contrast, tests found lower in the pyramid (less integrated, as signified by the arrow on the left) tend to be:

- more isolated test objects - test objects tend to be simple, with few or no collaborators, and those collaborators are themselves simple

- faster - tests run quickly and are therefore easy to run repeatedly

- more focused - tests cover only a small piece of functionality or very few components

- more stable - tests fail less despite changes

- simpler - tests are shorter and contain very little orchestration
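The contrast between the two ends of the pyramid can be sketched with a small example. The library late-fee rule, the `loans` table, and the function names below are all hypothetical; an in-memory SQLite database stands in for a real datastore so the sketch stays self-contained.

```python
import json
import sqlite3


def late_fee(days_overdue: int, daily_rate_cents: int = 50, cap_cents: int = 1000) -> int:
    """Pure business rule: no collaborators, so a test needs no setup."""
    if days_overdue <= 0:
        return 0
    return min(days_overdue * daily_rate_cents, cap_cents)


def test_late_fee_is_capped():
    # Lower in the pyramid: direct calls, no setup, nothing external to break.
    assert late_fee(3) == 150
    assert late_fee(90) == 1000


def fee_report(connection: sqlite3.Connection) -> str:
    """Integrates the rule with a datastore and a serialization format."""
    rows = connection.execute(
        "SELECT member, days_overdue FROM loans ORDER BY member"
    ).fetchall()
    return json.dumps({member: late_fee(days) for member, days in rows})


def test_fee_report_reads_loans_from_database():
    # Higher in the pyramid: schema, seed data, and output format are all
    # orchestrated here, so a change to any of them can break the test.
    connection = sqlite3.connect(":memory:")
    connection.execute("CREATE TABLE loans (member TEXT, days_overdue INTEGER)")
    connection.executemany(
        "INSERT INTO loans VALUES (?, ?)", [("ana", 3), ("ben", 0)]
    )
    assert json.loads(fee_report(connection)) == {"ana": 150, "ben": 0}
    connection.close()
```

Even at this small scale, the second test carries more orchestration than the first, and it would grow further with a real database or network hop.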

This concept illustrates a predictable relationship between the degree of component integration and cost. As such, it gives us a reliable structure for shaping our test strategy against the various levels (in terms of granularity or “integrated-ness”) of the system under test:

- We will have a more significant number of tests that focus on more isolated components. These tests will be fast, focused, and stable. They will be the foundation of our test automation strategy.

- We will have a smaller and smaller number of tests that focus on more and more integrated components across multiple test suites that focus on different discrete tiers of integration (i.e., system end-to-end test suite, system component test suite, etc.).

- We will have very few end-to-end tests, especially those that go through the UI.
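One way to act on this structure is to run the cheaper, more isolated tiers first and stop as soon as one fails, so that errors surface as early as possible. The sketch below assumes a hypothetical `Suite` record with a numeric `tier` and a `run` callable; real pipelines would express the same ordering in their CI configuration instead.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Suite:
    name: str
    tier: int  # 0 = most isolated; larger = more integrated
    run: Callable[[], bool]  # returns True when every test in the suite passes


def run_pipeline(suites: list[Suite]) -> list[str]:
    """Run suites from most isolated to most integrated, stopping at the first failure."""
    executed = []
    for suite in sorted(suites, key=lambda s: s.tier):
        executed.append(suite.name)
        if not suite.run():
            break  # skip the slower, more integrated tiers
    return executed


# Example: the component suite fails, so the end-to-end suite never starts.
suites = [
    Suite("end-to-end", tier=2, run=lambda: True),
    Suite("unit", tier=0, run=lambda: True),
    Suite("component", tier=1, run=lambda: False),
]
print(run_pipeline(suites))  # ['unit', 'component']
```

The tier numbers here are arbitrary; what matters is that the ordering follows the pyramid from the bottom up.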

To Follow

In the next part of the series, we will go through the strategy in detail, including the recommended approaches per test type per tier.
